The Future of Human Work Might Just Be Pressing Enter for AI

Time may be running out for humans.

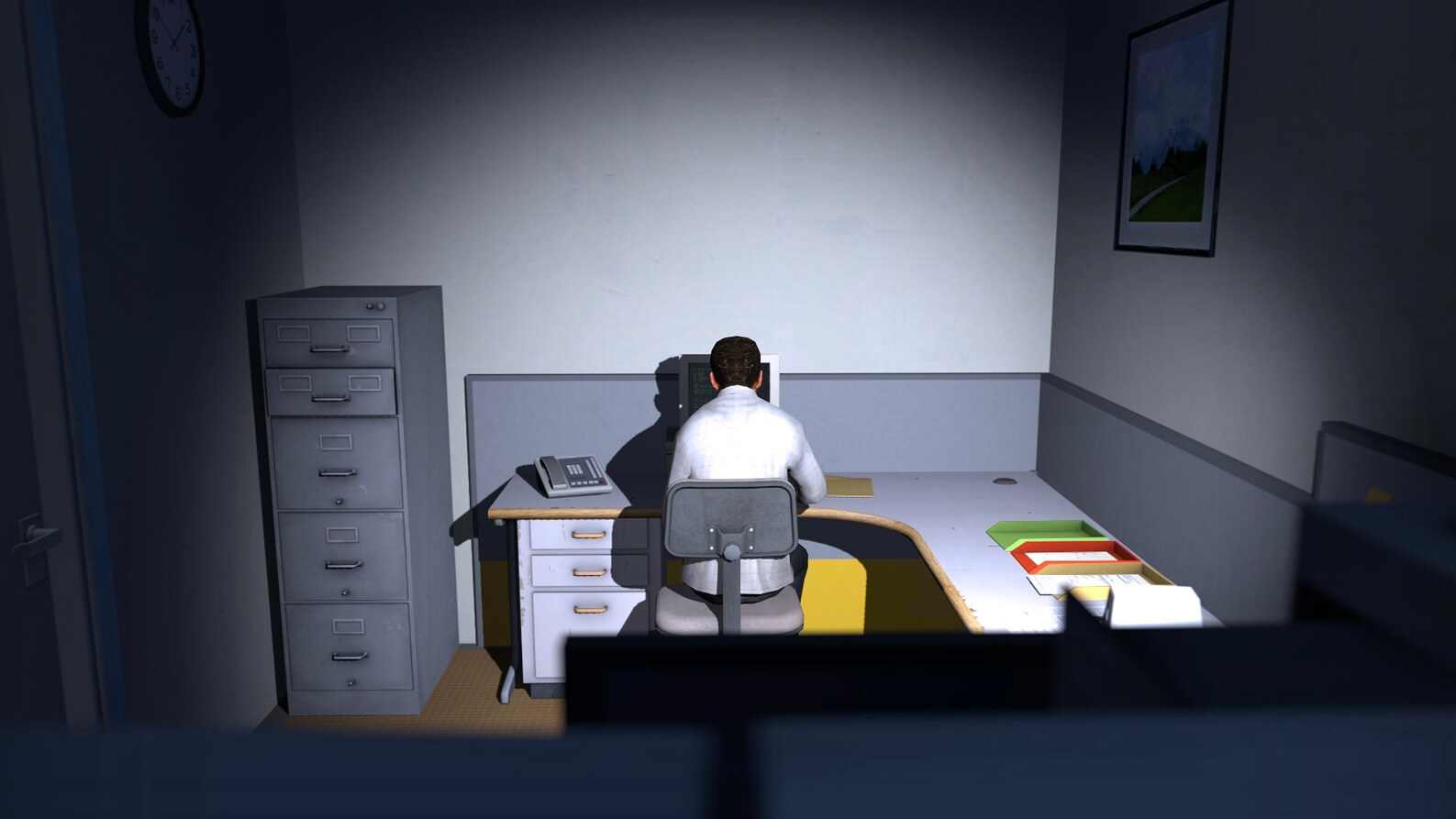

Cover image: The Stanley Parable. Its protagonist, Stanley, is Employee 427 in an office building, and his daily work consists of repeatedly pressing keys exactly as the computer instructs him to.

First, I want to make one thing clear: this article is entirely based on phenomena I’ve observed recently and the thoughts and reflections that came from them. Every word was typed by hand, and no AI was used in the writing of this article.

As a programmer and machine learning engineer, my daily work naturally revolves around business logic, data, code, and models.

Ever since ChatGPT was released at the end of 2022, various large-model-based coding assistants have brought a lot of convenience to our daily work—but also quite a bit of trouble.

- The convenience is obvious: any code or script I want to write can be generated or assisted by a large model.

- The trouble is equally obvious: large models make mistakes. And when they do, you often have to go back and debug their code.

This tension has sparked plenty of debate online. Some people embrace AI coding enthusiastically, while others dismiss it outright. Even Linus Torvalds eventually had his own classic “I changed my mind” moment:

“After criticizing AI, the Linux creator launches his first Vibe Coding project.”

My Experience Using AI for Programming

I’m someone who loves trying new things.

Since the birth of ChatGPT in late 2022, I’ve experimented with many different AI coding tools.

These ranged from web-based assistants like ChatGPT, Hailuo AI (now Kimi), Doubao, Qwen, and DeepSeek, to IDE-based coding assistants such as Cursor and VSCode Copilot, and later to CLI-based tools like Claude Code and Gemini CLI.

I’ve used each of these tools continuously for at least one or two months, and I’ve paid for most of them.

However, everything changed this month after I started using the combination of Codex + GPT-5.4.

My Recent Experience with OpenAI Codex

Since I started using the Codex + GPT-5.4 combination this month, I’ve been enthusiastically recommending it to my friends, because it keeps overturning my understanding of what AI coding tools can do.

I noticed several things:

- It is extremely good at actively gathering information to understand complex scripts or codebases.

- It excels at integrating large amounts of information from different sources (including web resources and local files) and distilling it into decision points that are very likely to be right.

- When information is insufficient, it knows when to stop, rather than continuing to generate hallucinated answers.

  (Once I accidentally left out a key piece of information in a long context. It directly pointed out the missing information, skipped that section, and only completed the parts that had sufficient data.)

- Finally, the code changes it makes are very likely to be correct and precise. Even if they aren’t at first, after several rounds of self-correction attempts, it usually manages to fix the issue.

My role in this process is basically:

- First, describe the task in natural language and provide as much prior information as possible.

- Tell it what skills are required to complete the task, let it learn those skills itself, and instruct it that whenever it makes mistakes and fixes them, it should update its skills to avoid repeating them.

- Then keep pressing Enter.

And as I keep pressing Enter, I watch it move forward at incredible speed along the correct path. Even if it occasionally deviates, it quickly corrects itself and records the lessons learned from mistakes to avoid repeating them in the future.

At some point, I started to realize:

It may gradually begin replacing my job.

The Future of Human Work Might Just Be Pressing Enter for AI

After realizing how powerful this “tool” is, I couldn’t sleep well for several nights.

Part of it was excitement—but part of it was also anxiety.

My mind kept racing: What will happen this year? Next year? What will the future look like? What will I still be able to do?

After all, this productivity boost is not just a few times over. It’s an order-of-magnitude leap.

I began wondering: maybe I’m exposed only because I’m an algorithm engineer working in a relatively narrow corner of model training. Perhaps those who actually develop the large models themselves will be safe?

My answer: probably not.

AI agents are already beginning to demonstrate stronger coding ability, decision-making capability, and execution speed than human engineers.

If large model engineers rely on those same capabilities to do their work, then they are fundamentally no different from any other engineers.

A recent article seems to support this idea:

“Anthropic on the cover of TIME! Inside sources reveal AI recursive self-improvement could happen within a year.”

In other words, within the next few years, large models may gradually detach from human guidance and begin iterating and evolving on their own.

Just as AlphaGo defeated human champion Lee Sedol after training in part through self-play, developing strategies that were sometimes incomprehensible to human players, large models may also surpass humans through exponential self-improvement.

They could generate scientific discoveries that humans might struggle to understand, at least initially.

And by that time, humans might truly be left with only one role:

Pressing Enter.